CONTENT:

- What is a Small Language Model (SLM)?

- SLM Over LLM: Why Go Small?

- Best SLMs: Choosing the Right SLM for Your Needs

- A Closer Look: Phi-4-mini vs Phi-3

- Best Laptops & Computers For SLM

- Creative & Practical Solutions with Your Own AI

- How to Run an SLM Locally with Nut Studio ⚡Easy Setup

- Frequently Asked Questions (FAQ)

What is a Small Language Model (SLM)?

If you've read our introduction to local LLMs, you already know that a language model is like having a powerful brain running on your computer. But not every brain is a good fit for the "body", that is, your computer.

Think of an LLM as the Library of Congress: a colossal, magnificent institution holding information on nearly every topic imaginable. It's a true jack-of-all-trades, but accessing what you need comes at a cost, both in time and in computational resources.

An SLM, on the other hand, is like a specialized, expert-level bookshelf right on your desk. It may not hold every book in the world, but the ones you need are within arm's reach. It's focused, fast, and designed to run efficiently on your personal devices.

SLM Over LLM: Why Go Small?

At some point you'll have to choose between a Large Language Model (LLM) and a Small Language Model (SLM). While large models are powerful, SLMs offer a distinct set of advantages that make them a better fit for many everyday tasks.

Here's how to choose by understanding the core benefits of going small:

Table 1: Resources & Cost Comparison

| Aspect | SLMs | LLMs |
|---|---|---|
| Hardware Needs | ✅ Light laptops & mobile devices; low energy consumption | ⚠️ Requires high-end GPUs; high energy consumption |
| Cost | Affordable for individuals & small teams | Expensive enterprise solutions |
| Best For | Startups, students, budget-conscious users | Large organizations with big budgets |

Table 2: Performance & Capabilities

| Aspect | SLMs | LLMs |
|---|---|---|
| Speed | ⚡ Fast response times; low latency | Can be 50x slower; higher latency |
| Accuracy | Excellent for specific domains; fewer hallucinations in specialty areas | Broad knowledge base; may hallucinate on niche topics |
| Knowledge Type | Specialist: Deep, focused expertise | Generalist: Wide-ranging knowledge |
| Customization | ✅ Quick to fine-tune & deploy | ⏰ Time-intensive to customize |

Table 3: Deployment & Use Cases

| Aspect | SLMs | LLMs |
|---|---|---|
| Privacy | Runs locally, data stays private; works offline | ☁️ Cloud-based; data sent to servers |
| Ideal Users | Creators, students, small teams, privacy-focused users | Large enterprises, research institutions, complex operations |
| Best Applications | Real-time translation, voice assistants, customer support bots, industrial monitoring | Complex research, multi-modal tasks, general chatbots, content generation |

Try Nut Studio for secure, on‑device AI

The choice is not about which is "better", but about which is the right tool for your device and the job. LLMs are powerful generalists ideal for complex, large-scale applications, while SLMs are efficient specialists well suited to targeted, private, and real-time tasks.

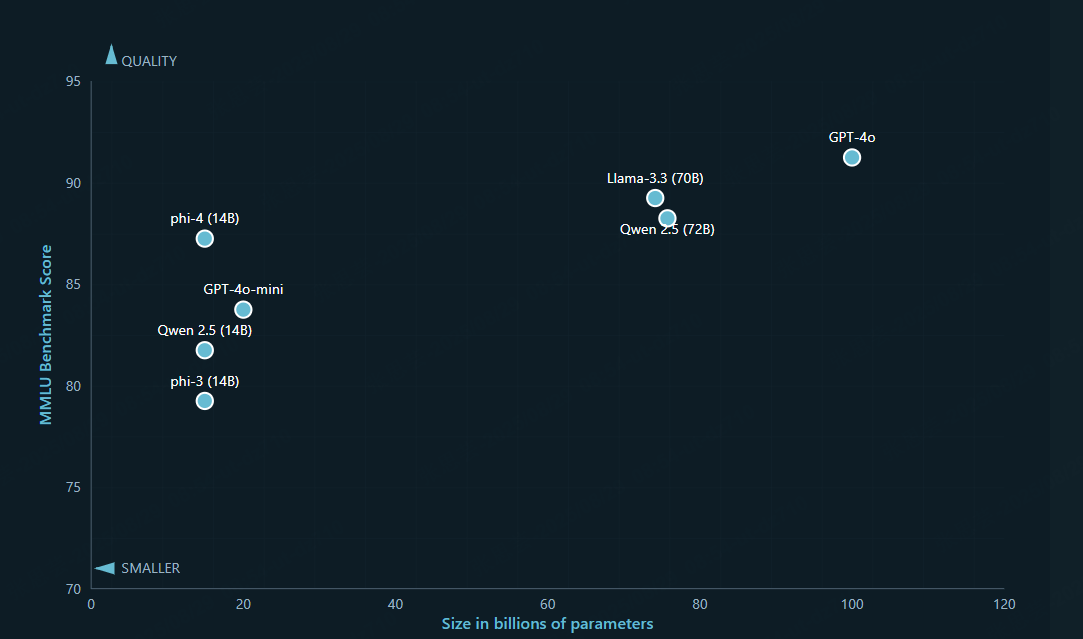

Best SLMs: Choosing the Right SLM for Your Needs

NVIDIA has also recently shared its views on SLMs, and the world of SLMs is vibrant and growing. Many good models are lightweight enough to run on common hardware (like a laptop) without needing a supercomputer, but each has strengths in different areas. Here are a few popular examples that should suit most beginners:

- Microsoft Phi-3/4-mini

- Google Gemma-3

- Llama 3.2

SLM Recommendations at a Glance

| User Group | Recommended SLM(s) | Key Feature | Primary Use Case Example |
|---|---|---|---|
| Students | Microsoft Phi-3/4-mini | Interactive Tutoring & Research | Complex essay topic analysis & research summaries |
| Students | Google Gemma-3 | Personal Coding Assistant | Code writing & debugging support |
| Content Creators | Llama 3.2 | Creative Partner & Style Mimicry | Content creation & event organization |
| Traditional Companies | All Three Models | Secure, On-Premise Automation | Internal tool automation & handling of sensitive information |

1 Best for Students: Microsoft Phi-4-mini

For students, the goal isn't just to get answers faster, but to understand concepts more deeply and build practical skills. The ability to run SLMs on a laptop makes them a reliable study partner, even with spotty Wi-Fi, while keeping your research private.

Phi-4-mini's key strength is high-quality reasoning in a small package. Think of it as an interactive tutor. You can feed it a chapter from a dense textbook and ask it to explain a concept in simple terms, generate a quiz on the material, or walk you through a math problem step by step. Its large context window (up to 128K tokens) is also well suited to loading multiple research papers so it can help you find connections and synthesize information for essays.
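To get a feel for what a 128K-token window means in practice, the following minimal sketch estimates whether a set of documents would fit. It assumes a rough rule of thumb of about four characters per token for English text; real tokenizers vary by model and language, so treat the result as a ballpark figure only. The function names and the `reserve_for_answer` parameter are illustrative, not part of any tool mentioned in this article.

```python
import math

CONTEXT_LIMIT_TOKENS = 128_000  # the "up to 128K" figure quoted above
CHARS_PER_TOKEN = 4             # assumption: rough average for English text


def estimate_tokens(text: str) -> int:
    """Approximate the token count of a text from its character count."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_in_context(documents: list[str], reserve_for_answer: int = 4_000) -> tuple[int, bool]:
    """Return (estimated tokens, whether they fit with room left for the reply)."""
    total = sum(estimate_tokens(doc) for doc in documents)
    return total, total + reserve_for_answer <= CONTEXT_LIMIT_TOKENS


if __name__ == "__main__":
    # Three hypothetical papers of about 60,000 characters each.
    papers = ["x" * 60_000] * 3
    tokens, ok = fits_in_context(papers)
    print(f"Estimated tokens: {tokens}, fits in context: {ok}")
```

Reserving part of the window for the model's answer is a design choice: a prompt that fills the entire window leaves no room for the reply.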

2 Best For Coders: Google Gemma-3

If you're a computer science student or a beginner learning to code, Gemma-3 can be a reliable teacher. It is well suited to code generation and assistance. You can use it to help you write functions, find bugs in your assignments (and explain why they are bugs), and learn best practices in languages like Python, Java, or C++. It's like having a teaching assistant available 24/7.

3 Best For Content Creators: Llama 3.2

Content creators thrive on originality and a unique voice. An SLM should be a partner that enhances creativity, not a machine that produces generic text. The key is using a model that is flexible and can be adapted to your style.

This model is highly regarded for its creativity, nuance, and ability to follow complex instructions. You can use it as a brainstorming partner to overcome writer's block by asking for unique angles on a topic. More powerfully, you can "teach" it your style by providing examples of your past work. It can then help you draft articles, social media posts, or video scripts that sound like you. It excels at generating different stylistic options, allowing you to choose the one that fits best.

4 Best For Traditional Companies: A Mix of Models

Companies face a unique set of challenges: the need for efficiency, the high cost of enterprise software, and the absolute requirement to keep company and customer data secure. SLMs are a perfect solution because they are small enough to be run "on-premise" (on a company's own servers) or even on a local device.

- For Legal and HR: Microsoft Phi-4-mini can be fine-tuned on your internal contract database or HR policies. It can then run securely on your internal network to help employees review documents or get answers to policy questions without sending sensitive data to an external service.

- For Customer Support: Llama 3 8B can power an internal customer service bot. Trained on your product manuals and past support tickets, it can provide fast, relevant answers, reducing costs and improving customer satisfaction.

- For IT and Development: Your tech team can use Google Gemma to build internal tools, automate scripts, and speed up development, boosting productivity without needing expensive, specialized software.

A Closer Look: Phi-4-mini vs Phi-3

While models like Phi-3 are excellent, the technology is advancing rapidly. Nut Studio features Phi-4-mini, a significant upgrade that makes your local AI assistant smarter and more reliable.

Compared to its predecessor, Phi-3, Phi-4-mini is a more capable model designed to run efficiently on your personal computer.

Here's a breakdown of the improvements:

| Feature | Microsoft Phi-3 | Microsoft Phi-4-mini (Available in Nut Studio) |
|---|---|---|
| Core Strength | Strong at language comprehension and general reasoning tasks. | Excels at complex reasoning, logic, and mathematical problem-solving. |
| Offline Performance | Runs well locally. | Optimized to run smoothly on laptops and other devices, even without an internet connection. |
| Context Limit | Handles standard-length documents. | Can process and understand very long documents or reports in one go (supports up to a 128k token limit). |
| Safety & Reliability | Good general safety features. | Has enhanced safety measures from extensive testing in multiple languages, resulting in a lower error rate. |

Phi-4-mini is an upgrade that makes your AI helper smarter on your own devices, safer to use, and better at tackling complex tasks quickly and reliably. Having access to it through an easy-to-use platform like Nut Studio puts state-of-the-art AI right at your fingertips.

Try Nut Studio to Experience Phi-4-mini

Best Laptops & Computers For SLM

For content creators, students, and businesses looking to explore the potential of AI tools without a deep technical background or compromising data privacy, running Small Language Models (SLMs) locally is an excellent solution. This guide provides tailored recommendations for Windows-based laptops and computers, ensuring you can find the right machine to power your AI endeavors.

1 For Students

Affordability and portability are the priorities here. Students usually don't need high-performance machines built for large, complex models; the focus is on running smaller models efficiently on common hardware.

| Tier | Processor (CPU) | RAM | Storage (SSD) | Graphics (GPU) | Capable of Running |
|---|---|---|---|---|---|
| Good (Entry-Level AI) | Intel Core i5 / AMD Ryzen 5 | 16GB | 512GB | Integrated Graphics | Small models (<1 GB): Nomic-Embedding-137M, Qwen 3-0.5B, Gemma-3-1B-it, Llama-3.2-1B-Instruct |
| Better (Smooth SLM Experience) | Intel Core i7 / AMD Ryzen 7 | 16GB | 1TB | NVIDIA GeForce RTX 3050 / 4050 (4GB-6GB VRAM) | All "Good" models plus small-to-medium models (~2.5 GB): Gemma-3-1B-it, Phi-4-mini-instruct, Qwen3-4B |

2 For Content Creators

Content creators often have powerful hardware for creative work, which is well suited to running more demanding local AI models.

| Tier | Processor (CPU) | RAM | Storage (SSD) | Graphics (GPU) | Capable of Running |
|---|---|---|---|---|---|
| Good (Standard Creator Laptop) | Intel Core i7 / AMD Ryzen 7 | 32GB | 1TB+ | NVIDIA GeForce RTX 4060 (8GB VRAM) | Medium models (~4-7 GB): Mistral‑7B‑Instruct, DeepSeek‑Coder‑Lite, DeepSeek-V2-Lite-Chat, Qwen2/3‑7B |
| Better (High-End Creator Desktop) | Intel Core i9 / AMD Ryzen 9 | 32GB - 64GB | 2TB+ | NVIDIA GeForce RTX 4070 / 4080 (12GB-16GB VRAM) | All models at high performance with less compression and a larger context window |
| Best (Professional Workstation) | Intel Core i9 / AMD Ryzen Threadripper | 64GB+ | Fast NVMe SSDs | NVIDIA GeForce RTX 4090 (24GB VRAM) | Multiple models simultaneously; very large models (13B to 34B parameters) |

3 For Traditional Companies

This group prioritizes security and reliability, making local AI a strong choice for data privacy. Recommendations focus on standard business hardware and strategic upgrades.

| Tier | Processor (CPU) | RAM | Storage (SSD) | Graphics (GPU) | Capable of Running |
|---|---|---|---|---|---|
| Standard Business Laptops | Intel Core i5 / Intel Core i7 (with vPro) | 16GB - 32GB | 512GB+ | Integrated Graphics | Smallest models (<1 GB): Feasible for basic, slow tasks like text summarization, ensuring data remains on-device. |
| Better (Recommended Upgrade for Power Users) | Intel Core i7 / Intel Core i9 | 32GB | 1TB | NVIDIA RTX 2000 / 3000 Ada Generation (8GB+ VRAM) | All models on the provided list, enabling robust internal applications while maintaining corporate IT standards for stability and reliability. |

In short, a standard business laptop can run the smallest models (under 1 GB) for basic tasks like text summarization while keeping data on-device. The upgraded tier can run all models on the provided list, which enables robust internal applications while adhering to corporate IT standards for stability and reliability.
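The approximate model sizes quoted in the hardware tables can be sanity-checked with simple arithmetic: the weights of a model take roughly (number of parameters) × (bits per weight) ÷ 8 bytes. The sketch below assumes 4-bit compression (quantization) by default and counts weights only; real model files and runtime memory use are somewhat larger, so treat these numbers as lower bounds. The function name is illustrative.

```python
def estimate_weight_size_gb(params_billion: float, bits_per_weight: int = 4) -> float:
    """Approximate size of the model weights in gigabytes (1 GB = 1e9 bytes).

    Assumption: every weight is stored with the same number of bits.
    Runtime overhead (context cache, buffers) is not included.
    """
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    # Parameter counts taken from the model names used in the tables (1B, 4B, 7B).
    for size in (1, 4, 7):
        four_bit = estimate_weight_size_gb(size, bits_per_weight=4)
        eight_bit = estimate_weight_size_gb(size, bits_per_weight=8)
        print(f"{size}B parameters: ~{four_bit:.1f} GB at 4-bit, ~{eight_bit:.1f} GB at 8-bit")
```

This is why "less compression" in the higher tiers calls for more memory: the same model stored at 8 bits per weight needs about twice the space it needs at 4 bits.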

Creative & Practical Solutions with Your Own AI

An SLM running on your machine can be more than just a tool; it can become a personalized AI assistant tailored to your workflow. With simple prompts, you can turn your small model into a range of specialized solutions.

Here are some ideas:

- For Text Data Extraction: Give the SLM a long report and ask: "Extract all names, ages, and key customer points from this document, and output them as a table."

- Creative Partner: Use a prompt like, "Our brand is [xxx], and our products are eco-friendly water cups. We want to run a marketing campaign for the upcoming International Dog Day, giving away water cups when users share our content. What activities would you suggest?"

- Translator & Language Tutor: When data privacy is a priority, or when you're offline, your local SLM can both translate professional emails into Spanish and teach core concepts (e.g., por vs. para) with clear rules and examples. For example: "Translate the following email into Spanish for a formal business context. Preserve tone and formatting."

- Writing Assistant: When people use online AI to rewrite content, the results often share the same repetitive, recognizably "ChatGPT" style. With a local SLM, you can provide samples of your own writing and create an AI agent that follows your way of thinking. The output will then sound like you, and your data is never uploaded to the cloud or shared with others.
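If you reuse prompts like these often, it can help to keep them as templates and fill in the variable parts each time. The following is a minimal sketch using Python's standard `string.Template`; the template wording is adapted from the ideas above, and the names `EXTRACTION_PROMPT`, `TRANSLATION_PROMPT`, and `build_prompt` are hypothetical. It only builds the prompt text, which you would then paste into your local model's chat window.

```python
from string import Template

# Reusable prompt templates; $document and $email are filled in per task.
EXTRACTION_PROMPT = Template(
    "Extract all names, ages, and key customer points from the document below, "
    "and output them as a table.\n\n---\n$document\n---"
)

TRANSLATION_PROMPT = Template(
    "Translate the following email into Spanish for a formal business context. "
    "Preserve tone and formatting.\n\n---\n$email\n---"
)


def build_prompt(template: Template, **fields: str) -> str:
    """Fill a template; raises KeyError if a required field is missing."""
    return template.substitute(**fields)


if __name__ == "__main__":
    report = "Alice, 34, wants faster delivery. Bob, 51, asked about refunds."
    print(build_prompt(EXTRACTION_PROMPT, document=report))
```

Keeping the instruction and the pasted content visibly separated (here with `---` lines) is a simple convention that makes it easier for the model to tell the task from the material.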

Try these prompts and create your first task in Nut Studio

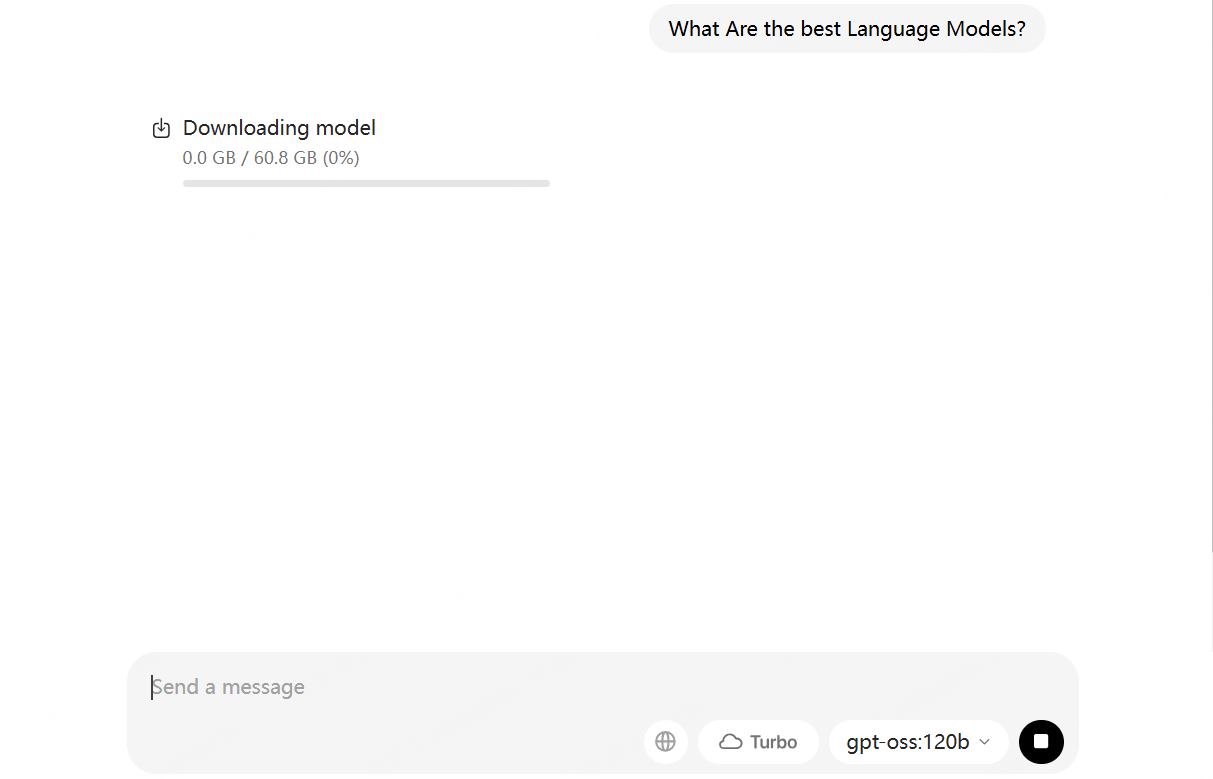

How to Run an SLM Locally with Nut Studio [Easy Setup]

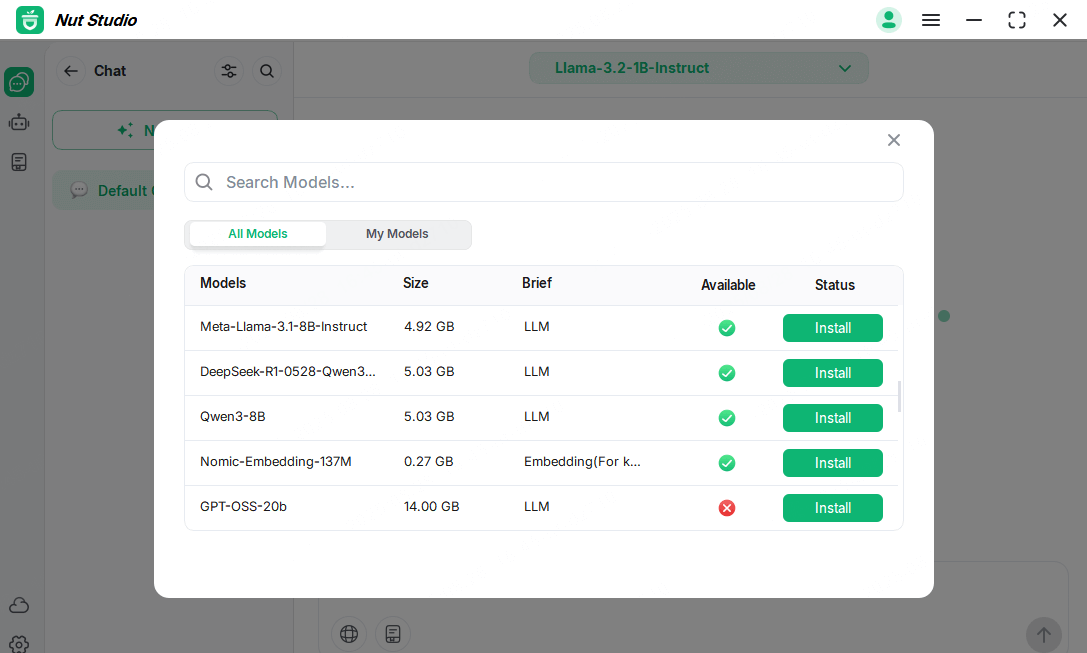

Traditionally, setting up a Small Language Model (SLM) required technical know-how, command-line interfaces, and complex deployment steps. This barrier kept many people from enjoying the benefits of local AI. Convenient products such as Ollama now allow direct download and installation, but in my experience their guidance on model selection is often not detailed enough. When I first used one of these products, no model size data was shown, so I inadvertently downloaded a 60GB model, which was a significant challenge for my system.

This is where our product, Nut Studio, provides a better experience. It is just as easy to download and install, and it also quickly analyzes your computer's specifications to identify suitable models. In the model selection list, you can clearly see each model's size and availability before you download.
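The idea of matching models to a machine can be illustrated with a short sketch. This is not Nut Studio's actual logic, which is not described here; it is a simplified assumption that a model is "suitable" when its file size fits within a fixed share of the machine's memory. The catalog entries and their sizes are illustrative values loosely based on the size tiers used earlier in this article, and `ModelInfo`, `suitable_models`, and `headroom` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelInfo:
    name: str
    size_gb: float  # approximate download size; illustrative values only


def suitable_models(catalog: list[ModelInfo], memory_gb: float, headroom: float = 0.5) -> list[ModelInfo]:
    """Return models whose size fits in `headroom` of available memory, smallest first.

    Assumption: leaving half the memory free for the OS and other apps
    is a reasonable default; adjust `headroom` to taste.
    """
    budget_gb = memory_gb * headroom
    fitting = [model for model in catalog if model.size_gb <= budget_gb]
    return sorted(fitting, key=lambda model: model.size_gb)


# Hypothetical catalog for demonstration purposes.
CATALOG = [
    ModelInfo("Llama-3.2-1B-Instruct", 0.8),
    ModelInfo("Phi-4-mini-instruct", 2.5),
    ModelInfo("Mistral-7B-Instruct", 4.4),
    ModelInfo("some-very-large-model", 60.0),
]

if __name__ == "__main__":
    for model in suitable_models(CATALOG, memory_gb=16):
        print(f"{model.name}: ~{model.size_gb} GB")
```

With a check like this in place, a 60GB download would be filtered out on a 16GB machine before it ever started.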

Run your first SLM in three simple steps:


1. Download: Install the Nut Studio application on your computer.

2. Choose a Model: Browse the library of available SLMs and select one to set up with a single click. No manual deployment is needed.

3. Create Your AI: Copy one of the prompts from the section above (or write your own) to instantly create your personal AI assistant.

Download and run your first SLM in minutes with Nut Studio

Frequently Asked Questions (FAQ)

1 Do I need a supercomputer to run an SLM?

Not at all. Most SLMs can run on standard computers with 8GB of RAM and a modern CPU, including light laptops and edge devices. Many vloggers recommend an NVIDIA RTX 4050 or better, and such a GPU does run models more efficiently, but it isn't necessary for most small language model applications. Nut Studio adapts to your device, from basic notebooks to high-performance computers. Refer to the model list in the application for suitable options.

2 Are SLMs less "smart" than giant LLMs?

They are specialized, not necessarily less smart. An LLM is like a general practitioner, while an SLM is like a specialist (e.g., a cardiologist). Within its area of expertise, such as coding, writing, roleplaying, or running on your device, an SLM can be just as "smart" as a giant LLM, and often more efficient.

3 How does a local SLM ensure data privacy and offline use?

Running an SLM locally keeps your data private because the model operates entirely on your own machine. Your prompts, chats, and files are never sent over the internet to a third-party server. Once installed, the SLM can be used fully offline, making it a good fit for handling sensitive information or working in environments with no internet connectivity.

4 What is the difference between LLM and SLM in healthcare?

In healthcare, an LLM might be used to analyze massive research datasets. An SLM, however, could be deployed on a local device within a clinic to perform specialized tasks like summarizing patient notes or quickly looking up medication interactions. Because the SLM runs locally, it does so without sending sensitive patient data to the cloud, which protects patient privacy and supports HIPAA compliance.
